The Algorithm of You: How AI Programs Human Behavior


Do you know that Facebook knows you better than you know yourself?

It’s a fact. If you are like most users, you have given a lot of “likes” over the years, haven’t you? How many? A few thousand? Bad news. You handed over your free will to the devil of algorithmic behavior modification.

Behind every piece of content we see is a manipulative AI algorithm that seeks to modify our behavior in some way.

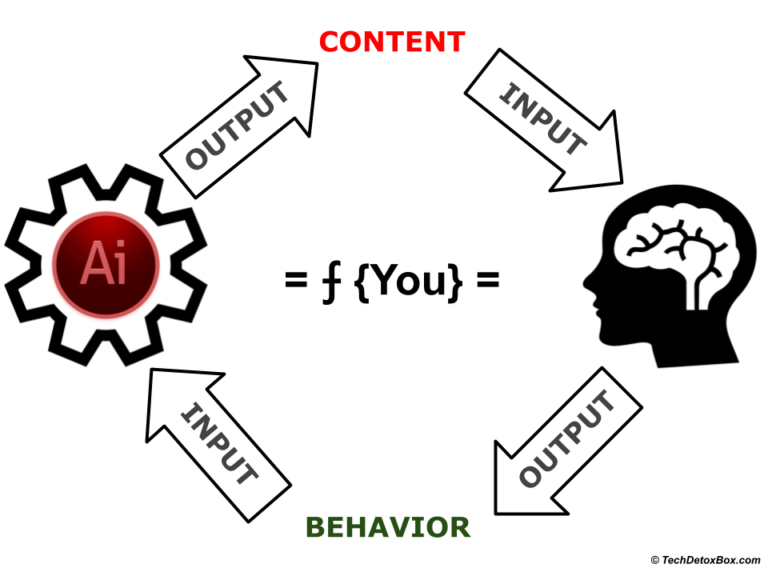

The Algorithm of You can program your behavior for any outcome.

A study on the predictive power of Facebook Likes found that “Likes” alone are enough to accurately predict extremely sensitive personal information that users might prefer to keep to themselves: sexual orientation, ethnicity, religious and political views, personality traits, intelligence, happiness, addiction, parental separation, age, and gender.

How is this possible? By placing free surveys in the feeds (everyone is curious about THEMSELVES!), researchers had users complete detailed personality assessments and provide their demographic data: basically, what is TRUE. In addition, users’ friends and family also provided insights about their personality. This data became a benchmark to test if the algorithm can accurately predict the SAME social and psychological characteristics just from the data on Likes.

And guess what.

- IF YOU HAVE GIVEN 150 LIKES, FACEBOOK KNOWS MORE ABOUT YOU THAN YOUR BEST FRIENDS.

- IF YOU HAVE GIVEN 250 LIKES, FACEBOOK KNOWS MORE ABOUT YOU THAN YOUR MOTHER.

- IF YOU HAVE GIVEN MORE THAN 300 LIKES, FACEBOOK KNOWS YOU BETTER THAN YOU KNOW YOURSELF.
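The benchmark test described above (survey answers as ground truth, Likes as the only input) can be sketched in a few lines. This is a toy illustration, not the study's actual model: a simple naive Bayes classifier is swapped in, and every page name, trait label, and user below is invented.

```python
import math

# Hypothetical toy data: (set of liked pages, trait reported in the survey).
TRAIN = [
    ({"hiking", "camping", "poetry"}, "introvert"),
    ({"poetry", "chess"}, "introvert"),
    ({"chess", "hiking"}, "introvert"),
    ({"nightclubs", "festivals"}, "extrovert"),
    ({"festivals", "karaoke", "hiking"}, "extrovert"),
    ({"karaoke", "nightclubs"}, "extrovert"),
]
# Users the model never saw; their survey answers are the benchmark.
HELD_OUT = [
    ({"chess", "poetry", "camping"}, "introvert"),
    ({"festivals", "karaoke"}, "extrovert"),
]

def train(users):
    """Count how often each page is liked within each surveyed trait group."""
    pages = set().union(*(likes for likes, _ in users))
    counts, totals = {}, {}
    for likes, trait in users:
        totals[trait] = totals.get(trait, 0) + 1
        for page in likes:
            counts[(trait, page)] = counts.get((trait, page), 0) + 1
    return pages, counts, totals

def predict(model, likes):
    """Pick the trait with the highest naive Bayes log-score for these Likes."""
    pages, counts, totals = model
    n_users = sum(totals.values())
    best_trait, best_score = None, -math.inf
    for trait, n in totals.items():
        score = math.log(n / n_users)
        for page in pages:
            # Laplace-smoothed chance that a user with this trait likes the page.
            p = (counts.get((trait, page), 0) + 1) / (n + 2)
            score += math.log(p if page in likes else 1 - p)
        if score > best_score:
            best_trait, best_score = trait, score
    return best_trait

def accuracy(model, users):
    """Share of users whose predicted trait matches their survey answer."""
    hits = sum(predict(model, likes) == trait for likes, trait in users)
    return hits / len(users)

model = train(TRAIN)
print(accuracy(model, HELD_OUT))
```

The point of the sketch is the shape of the test: the model only ever sees Likes, and its guesses are scored against what people said about themselves.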

The researchers stated that it would be highly unethical to use such deep psychological insights, obtained so easily from millions of users without their knowledge, for any form of influence on users’ behavior.

Yet, this is the very description of the business model behind social media.

The feeds we see on our screens are not random or neutral: they are a targeted plan of attack, with personalized content custom-picked to nudge a particular human toward a particular behavior.

Ordered by the real customers of the platform – be they advertisers or terrorist recruiters. You are not the customer. You are the product.

Any paying customer of Facebook can do it, and it’s not expensive. According to the Center for Humane Technology, the attention of an entire nation can be purchased for the price of a used car.

AI Does Not Have Human Limitations

Humans have always tried to influence each other. If I want to borrow money from you, or convince you to join a PTA, I can smile and try to be nice. I am not sure it would work though. My ability to convince you is limited by only a few variables I know about you: my knowledge of your character, the history of our previous relationship, me guessing if you are in a good mood or not. Some of these are not even accurate facts, but preconceptions and stereotypes that I formed about you.

Plus, I have my own human issues – I could feel particularly insecure today, maybe I had a bad night’s sleep, I am introverted and shy, I picked the wrong outfit, used the wrong tone of voice, or messed up my makeup – a thousand other things that will render me ineffective.

Ultimately, your decisions are up to you. You maintain your free will.

I can only guess what motivates you to act. I cannot accurately estimate my chances of influencing you.

But Artificial Intelligence can. AI does not have our human limitations.

The Algorithm of You operates with thousands – maybe millions – of variables that it collected about you.

Variables from your entire digital footprint across all the apps and devices you ever used. This data is aggregated into a massive database that contains everything there is to know about you. The more you did online, the larger your digital footprint.

Also, your digital history goes back years – and the longer it is, the more AI knows about you. As credit history is predictive of your financial behavior, your digital history is predictive of ANY BEHAVIOR.

If there are gaps in the data, AI fills them with information about people who are JUST LIKE YOU. That’s an easy task: it knows who your social media friends are.

Your digital profile contains your exact pressure points. If pressed in the right way at the right time, the desired behavioral outcome is almost inevitable.

It’s not a guess of 50/50. It’s the certainty of 90/10.

You are not consciously aware of what these pressure points are. The machine builds a Function of You that modifies the outcome – your behavior – with precision. You comply without thinking.

This is not advertising. This is mind control.

Behavior Modification: the Function of You

Let’s consider a hypothetical example of what a function of behavior modification might look like. Say the customer of a social media platform is an advertiser who wants to make you buy a piece of jewelry. The content you would see in your social media feed would press the psychological buttons found in your digital footprint with calculated precision:

⨍unction of Behavior Modification =

0.X * Insecurity +

0.X * Mid-Life Crisis (look younger!) +

0.X * Envy of better-looking social media friends +

0.X * FOMO (everybody buys these, what are you waiting for!) +

0.X * Guilt to refuse to buy an item recommended by a friend +

0.X * Anger at husband (I’ll show him!) +

0.X * Subliminal messages (celebrity photos wearing jewelry) +

0.X * Political Unity (product ads shown next to the political content you agree with) +

0.X * Urgency to buy (sale!) +

0.X * Desire to save (coupon!) +

0.X * Scarcity (supplies are running out!) +

0.X * Social pressure (your friends like this product!) +

0.X * Charity (we donate to a good cause!) +

0.X * Narcissism (you deserve it!) +

0.X * Buying prompt timed to a moment of weakness +

a million other variables…. =

= Behavioral Outcome: User Buys a Piece of Jewelry

The process can be repeated to program any human to achieve any behavioral outcome with a formula UNIQUE TO THEM.
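Read literally, the formula above is a weighted sum of psychological triggers, with a different set of weights for every person. A minimal sketch of that idea follows. The weights, trigger names, and users are hypothetical stand-ins for the unspecified “0.X” values, and the logistic squashing into a 0-to-1 score is an added assumption, not something the formula states.

```python
import math

# Hypothetical per-user weights; each user has a formula unique to them.
USER_WEIGHTS = {
    "alice": {"insecurity": 0.6, "fomo": 0.3, "scarcity": 0.1},
    "bob": {"insecurity": 0.1, "fomo": 0.2, "scarcity": 0.7},
}

def outcome_score(weights, triggers, bias=-0.5):
    """Weighted sum of trigger intensities (each 0..1), squashed into 0..1."""
    total = bias + sum(w * triggers.get(name, 0.0) for name, w in weights.items())
    return 1 / (1 + math.exp(-total))

def best_trigger(weights):
    """The single pressure point with the largest weight for this user."""
    return max(weights, key=weights.get)

# The same "supplies are running out!" ad lands differently on each user.
scarcity_ad = {"scarcity": 1.0}
for user, weights in USER_WEIGHTS.items():
    print(user, best_trigger(weights), round(outcome_score(weights, scarcity_ad), 3))
```

In this toy setup the scarcity ad scores higher for the user whose weights lean on scarcity, which is all “unique to them” means here.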

The Algorithm of You is a Psychopath

It’s not as simple as personal trait A causes behavior B. What happens at the intersection of brain neurobiology and machine intelligence is incredibly complex. AI finds intricate relationships among your multitude of variables, traits, and insecurities you have no idea you have. A human analyst, even one working directly with your data, may not notice these connections – they are not obvious or intuitive. Some do not make sense. But the algorithm will find correlations that work.

Even the creators of the algorithms are not clear how this happens. Some of the correlations the machine finds in the data cannot even be defined as a logical variable by a human programmer. YouTube’s creators have been surprised that their algorithm somehow drives people toward dark content and conspiracy theories – all for the sake of “engagement”.

The attention engineers may not know why it works, but the machine does.

Maybe it’s watching a particular YouTube video that led you to a recent Amazon purchase. Maybe seeing an insurance commercial next to certain celebrity gossip is predictive of your voting behavior – but only if you are a middle-aged white female. It can be anything. Machines run millions of “split-tests” to find out if you are more likely to click if the ad or a political campaign is presented, say, with a pink vs blue background. You are not aware of these weird quirks in your subconscious mind – but AI knows they are real.
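A single split-test of the kind mentioned above can be sketched as a comparison of click rates between two variants. The click counts below are invented, and the two-proportion z-test with a 1.96 cutoff (roughly 5% two-sided significance) is one common way to run such a comparison, assumed here for illustration rather than taken from any platform.

```python
import math

def z_score(clicks_a, views_a, clicks_b, views_b):
    """Two-proportion z-statistic for the difference in click-through rates."""
    p_a, p_b = clicks_a / views_a, clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    return (p_a - p_b) / se

def pick_winner(results, threshold=1.96):
    """Return the better variant, or None if the gap could be plain noise."""
    (name_a, (clicks_a, views_a)), (name_b, (clicks_b, views_b)) = results.items()
    z = z_score(clicks_a, views_a, clicks_b, views_b)
    if abs(z) < threshold:
        return None
    return name_a if z > 0 else name_b

# Hypothetical results: variant name -> (clicks, views).
RESULTS = {"pink": (120, 1000), "blue": (90, 1000)}
print(pick_winner(RESULTS))
```

Run at scale across many variants and audiences, the same comparison is what lets a system keep whatever happens to work, with no need to know why.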

The algorithm knows you better than you. It has crawled all over your subconscious mind – revealed by your data.

The Algorithm of You never sleeps and is never tired. It does not have feelings. It’s not concerned with empathy and compassion. It does not ask whether its persuasion tactics are right or wrong, good or evil – only whether they are efficient at achieving the goal.

In other words, it does not have any ethical parameters built in. It’s not human. The Algorithm of You is only optimized for the outcome – behavior modification:

- Make you buy.

- Make you vote.

- Make you donate to a cause.

- Make you into a gaming addict.

- Make you go participate in riots.

- Make you into a terrorist.

- Make you surrender your free will in every area of your human existence.

- Make you give up more data to optimize the algorithm further.

The Algorithm of You is a psychopath.

When the highest bidder offers a price for modifying your behavior in any way, it can be done. Sometimes with over 90% accuracy – depending on the situation, AI can be that confident that you would behave exactly how it wants you to behave.

It’s a matter of pressing just the right psychological buttons in your brain. Feeding you the right information at the right moment of weakness. Making you feel angry or depressed – and pushing you into the behavioral outcome the Function of You is programmed for.

Remember the scene from the documentary The Social Dilemma where the protagonist is pulled back into the trap of his destructive digital addiction? Manipulative AI algorithms custom-picked a notification exploiting the character’s greatest weakness. All it took to “engage” the user was showing him: “Your ex-girlfriend is in a new relationship!”

It all happens below the level of our conscious awareness. You think you are making your own choices, when in fact you are being manipulated with precision.

Your well-being is not the programmed outcome of the Function of You. Only user engagement and platform profitability. This is a story as old as time – just follow the money.

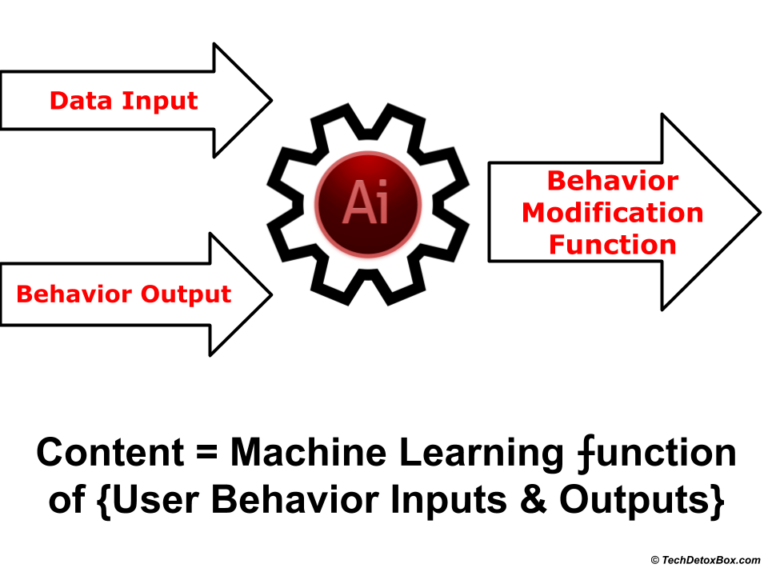

Programming of Behavior Modification Function

The Function of You is programmed inside the machine learning black box. The algorithm is fed two things: the Data from your digital footprint and the task of achieving a desired behavioral Output in a human. It then comes up with the best Function to achieve the outcome.

The Function of You dynamically adjusts in real time to bombard the user with subliminal messages until the desired result, a change in behavior, is achieved. The algorithm is self-optimizing. It means it gets better at calibrating your behavior over time as it acquires more data about you.

By giving the platforms our data in the course of our everyday digital activities, we are training the algorithm (for free!) how to manipulate us more efficiently. It’s called Machine Learning, because, well… it learns.
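The “self-optimizing” loop described above can be illustrated with a tiny online learner: every logged interaction nudges the weights, so predictions improve as the log grows. This is a minimal sketch under stated assumptions. The feature names, the interaction log, and the choice of logistic regression with gradient steps are all hypothetical, picked only to show the mechanism.

```python
import math

def predict(weights, features):
    """Estimated probability that the user clicks, given the context features."""
    z = sum(w * x for w, x in zip(weights, features))
    return 1 / (1 + math.exp(-z))

def update(weights, features, clicked, learning_rate=0.5):
    """One online gradient step: nudge the weights toward what the user did."""
    error = clicked - predict(weights, features)
    return [w + learning_rate * error * x for w, x in zip(weights, features)]

def train(log):
    """Start from zero knowledge and learn from each logged interaction in turn."""
    weights = [0.0, 0.0, 0.0]
    for features, clicked in log:
        weights = update(weights, features, clicked)
    return weights

def mean_error(weights, log):
    """Average gap between predicted click probability and actual behavior."""
    return sum(abs(clicked - predict(weights, f)) for f, clicked in log) / len(log)

# Hypothetical log. Features: [constant, shown_at_night, friend_liked_it].
# In this invented data the user clicks only on content shown at night.
SESSION = [([1, 1, 1], 1), ([1, 0, 0], 0), ([1, 1, 0], 1), ([1, 0, 1], 0)]

few = train(SESSION)        # four interactions
many = train(SESSION * 50)  # two hundred interactions
print(mean_error(few, SESSION), mean_error(many, SESSION))
```

Nothing in the code tells the learner that time of day matters; the pattern comes out of the data, and the error shrinks as more interactions are logged.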

It’s not fiction, it’s reality. The same process was used to train Google Translate – an incredible amount of text in different languages was fed into the machine, and the algorithm figured out the complex patterns in the languages, making human translators all but obsolete. No linguists were involved.

Jaron Lanier in his book Ten Arguments for Deleting Your Social Media Accounts Right Now calls the process of influencing the user with targeted content Algorithmic Behavior Modification, and the companies that engage in it, the BUMMER machine: Behaviors of Users Modified, and Made into an Empire for Rent.

We have talked to our friends online, shared our opinions, clicked on some links – and in doing so have unknowingly given the algorithmic behavior modification machine tools to manipulate us.

AI combines our unique data with the set of evolutionary cognitive biases that are the same for all humans.

The resulting formula is a psychological weapon used against us. We did not sign up for this. Yet, this is what the attention economy of surveillance capitalism is based on.

The obvious ethical objection to this business model is the fact that human mind control for the benefit of unknown third parties is dangerous. It is destroying human dignity, free will, and the very fabric of our society.

A Way Out of Mind Control

The breakdown of humanity and civil discourse has not been planned by an evil genius. Algorithms have no conscience: what happened was collateral damage. Side effects – like the fine print on medications, only unexpected and undisclosed.

But unlike medications, digital products can be redesigned and tweaked to minimize negative side effects of technology as they are discovered.

What shall be done to solve the problem? Here is a business opportunity for tech developers: use Machine Learning to develop a filtering algorithm that optimizes all incoming content for users’ benefit and wellbeing. It can be done by giving AI a different outcome to optimize for:

User happiness instead of user engagement.

AI would then tweak the parameters in the Function of You away from just efficiency and profitability and maximizing screen time to a more sustainable use…of the user. Who is the most valuable resource, after all.

- Ads are blocked – unless they are relevant to the user’s wellbeing.

- Political brainwashing in the echo chamber of identity politics is replaced with balanced news from across the spectrum.

- Social media feeds are optimized for positivity, not envy and comparison.

- Personal data belongs to the user, not to the all-seeing eye of surveillance.

- But wait – would not all this make the user even more addicted to the improved digital diet? Perhaps. Natural stopping points would be a part of the interface to remind people to take a break and attend to the real world.
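The proposal above amounts to keeping the ranking machinery and swapping the objective it optimizes. A minimal sketch, assuming each feed item already carries two model scores (both hypothetical, as are the item titles):

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Item:
    title: str
    predicted_engagement: float  # hypothetical model output, 0..1
    predicted_wellbeing: float   # hypothetical model output, 0..1

def engagement(item):
    return item.predicted_engagement

def wellbeing(item):
    return item.predicted_wellbeing

def rank(items, objective):
    """Order the feed by whatever outcome the system is told to optimize."""
    return sorted(items, key=objective, reverse=True)

FEED = [
    Item("Outrage thread", 0.9, 0.1),
    Item("Friend's good news", 0.5, 0.8),
    Item("Influencer vacation photos", 0.7, 0.2),
]

print([item.title for item in rank(FEED, engagement)])
print([item.title for item in rank(FEED, wellbeing)])
```

The same three items come out in a different order depending only on which function is passed to `rank`. The hard part, which this sketch skips, is producing a trustworthy wellbeing score in the first place.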

The users will be happier, stop freaking out, trust the platforms more, and maybe will not delete their Facebook and Instagram accounts.

And the world would be less insane than it is today.

Take Back Control

Sign up for our monthly newsletter to receive the latest digital wellbeing research and screen time management solutions. We never share your email with third parties.