Digital Wellbeing

Become Your Own Attention Engineer: How to Hack Bad Digital Habits with Behavior Design

What can we do if we feel helpless against our Facebook addiction? Our Instagram obsession? The unhealthy amount of time we spend scrolling through news feeds or playing video games? Devoting hours to our phone while neglecting our productivity, health, sleep, and relationships?

How can we break bad digital habits that have taken over our lives?

It’s not our fault. It’s not our lack of self-control. It’s not our children’s character flaw or our bad parenting. It’s a moral failure of the industry that is built on extracting human attention regardless of the human cost.

The problem is that Big Data knows more about our deepest psychological insecurities than we know ourselves.

Attention engineers behind social media, video games, and other digital tech use the science of behavioral psychology to design their products for maximum addictive potential. Our collection of cognitive biases is being used against us to take advantage of the weaknesses in the human brain.

What if the same knowledge of human habits can be harnessed to limit the addictive grip of technology on our lives? It turns out that it can. For all of us struggling with screen time, this is great news. Behavioral psychology can be used both ways: not only to build addictive digital products, but also to protect our well-being from overusing these products. Same science – different outcomes.

How can we become our own “attention engineers”, take control away from the tech industry, and build good screentime habits instead? Enter the art of Behavior Design.

We can hack our own psychology, and be better at it than the machines.

Behavior Design and the Attention Economy

Over the last few years our love affair with technology has gone past the honeymoon stage. The negative side effects of screens have become an open secret. For decades we were fascinated with the benefits of technology and gave little thought to the downside. But as more research comes out about the adverse effects of screen time – from reduced attention span to compromised productivity, social skills, and physical and mental health – it’s becoming clear that just about every traditional measure of human well-being is compromised by digital media overuse.

Parents are concerned about children growing up emotionally fragile, anxious, socially inept, and totally unprepared for life. The language of wide-eyed fascination with technology has been replaced with warnings of addiction. Unhealthy digital habits have consumed society as a whole, children and adults alike.

Is it time to panic yet?

With the release of The Social Dilemma, a documentary featuring members of the Center for Humane Technology, awareness of the problem went mainstream. Now almost everyone knows that something went terribly wrong at the intersection of technology and human well-being.

The merger between psychology and technology occurred without people noticing.

Sean Parker, the first president of Facebook, famously said: “The thought process that went into building these applications, Facebook being the first of them… was all about: ‘How do we consume as much of your time and conscious attention as possible?’ … exactly the kind of thing that a hacker like myself would come up with, because you’re exploiting a vulnerability in human psychology.”

The amount of attention is fixed by our biology – it is limited to 24 hours a day, minus the time we decide to sleep. Therefore, this scarce resource becomes ever-more valuable as the amount of information competing for our attention expands exponentially. Where attention is, there is money – that’s why it’s called the “attention economy”.

This creates an ethical dilemma for digital media companies, which are tempted to exploit human psychological weaknesses to divert our attention to their platform, away from other platforms and away from competing non-digital activities. As Netflix’s CEO put it, the company’s competition is sleep.

The result is our dysfunctional relationship with technology, with most “users” blaming themselves for being “addicted”, using language that should be reserved for drugs.

The work of attention engineering is called UX (User Experience) design, and it is optimized for maximum user engagement. Which translates to maximum time on the screen. Which in turn translates to maximum profits. It’s a vicious cycle.

Hack the humans – or go out of business. Technology had to hijack our evolutionary biology.

To develop addictive digital products, tech companies learned the workings of human nature from the best behavioral psychologists and neuroscientists. They applied what they learned to make products like Instagram and Facebook that tap into the deep evolutionary biology of social validation. Products like multiplayer video games that create a sense of purpose for addicted gamers who cannot find it in the real world. Products like Twitter and YouTube and TikTok and Snapchat and thousands of others that provide the user with a never-ending stream of digital “random rewards” and have the same addictive potential as slot machines at the casino.

Every successful digital product is based on the principles of behavioral psychology. Of course, they bring a lot of value into our lives – otherwise we would not use them. But in addition to solving our problems, the attention economy finds – and exploits – human weaknesses. As unethical as it is, that’s the business model behind trillions of dollars in market value of the biggest tech companies. And it’s unlikely to change any time soon.

Behavioral psychologists took a hit to their reputations after it became known that their science had been digitized for profit into products that were supposed to improve people’s lives – but in many cases were ruining them. It is almost impossible to enjoy the benefits of Facebook for 3 hours a day without experiencing its adverse side effects: feeling inadequate, anxious, isolated, and unproductive. The coin has two sides.

Rampant tech addiction could not – and should not – be blamed on psychologists though. Their knowledge of human nature was always meant to serve human well-being, not destroy it. The science of psychology was misapplied.

Doctors have to take the Hippocratic Oath and swear to “do no harm” to the patient. User Interface (UI) designers at Big Tech so far have no such ethical limitations and are free to manipulate the user’s brain for profit, disregarding the side effects: smartphones, social media, and video games taken in large doses can be devastating to productivity, relationships, and physical and mental health. Nasty surprise.

Behavioral scientists never meant it to be that way.

They raised ethical concerns about influencing human behavior while the science was still being developed. BJ Fogg, the founder of the Behavior Design Lab at Stanford University, is considered the creator of the field of persuasive technology. He wrote extensively about ethical considerations back in 2002 in his book Persuasive Technology: Using Computers to Change What We Think and Do – all before Facebook and smartphones and iPads came into existence. The warnings were there: technology is powerful. Handle with care.

When behavioral scientists like BJ Fogg taught future technology innovators, they warned them about ethical implications of applying technology to manipulate human behavior. But the tech leaders did not have the time to focus on the potential side effects. Ethical parameters would only limit the algorithms that were making billions.

They were too busy innovating, and too successful to stop. They believed in the beauty of technology and concentrated on its upside. They genuinely thought they were changing the world for the better. No one bothered to explore if there should be “small print” side effect warnings to go along with the wondrous new tech drugs.

We can find some warnings today in the legalistic language of terms and conditions – but only about privacy, not psychological harm. No one reads them. They are not fun compared to actually using the app.

Maybe by now behavioral psychologists feel similar to nuclear scientists. Nuclear power can be made into a bomb that kills thousands, or used to produce clean energy and improve lives. In the same fashion, the power of technology can be harnessed to enhance human well-being – or destroy it, depending on the use.

The scientists behind the atomic bomb were not evil people. How did they feel about their creation after Hiroshima? “Woe is me,” Einstein reportedly said when he heard of the explosion. Some of the creators of digital technologies feel similar pangs of conscience today: “What have we done?” The technology they created proved to be powerful – and often destructive – beyond anyone’s expectations. A force has been unleashed on the world, and it does not look like it will be contained any time soon.

Some tech insiders, like Sean Parker or Tristan Harris, actively speak out and become conscientious objectors. Some are quieter, flying under the radar by sending their kids to tech-free schools and tech-free summer camps, and by limiting screen time at home. They know full well that the user interface of digital products was based on the vulnerabilities of the human brain, and would rather not have their own kids manipulated that way, thank you very much.

We cannot wait for the government to catch up with regulations. The companies themselves will not volunteer to change their business practices of extracting human attention at all costs – as long as it’s legal, they are in compliance with the law.

If we are to protect ourselves from the adverse effects of addictive technology, our best chance is to use the same weapon that was used against us.

When we face persuasive design tactics that hack our humanity, we can disrupt these tactics with the help of Behavior Design. Digital media creators learned about your brain to get you addicted to their products, but when you take control back to craft your own environment of limited screen time, you win at their own game.

Where do we start? How do we hack the psychology of self?

Fogg Behavior Model

BJ Fogg’s work inspired many persuasive design techniques used to build successful products like Instagram, whose founder was among Dr. Fogg’s students. Who better to teach people how to overcome the irresistible pull of these products?

Behavior Design has been applied to create addictive technologies, but now it can become a shield of protection against these screen time weapons.

Professor Fogg’s Tiny Habits method, outlined in his 2020 bestselling book Tiny Habits: The Small Changes That Change Everything, can help people eat healthier, exercise more, be more productive – and less addicted to screens.

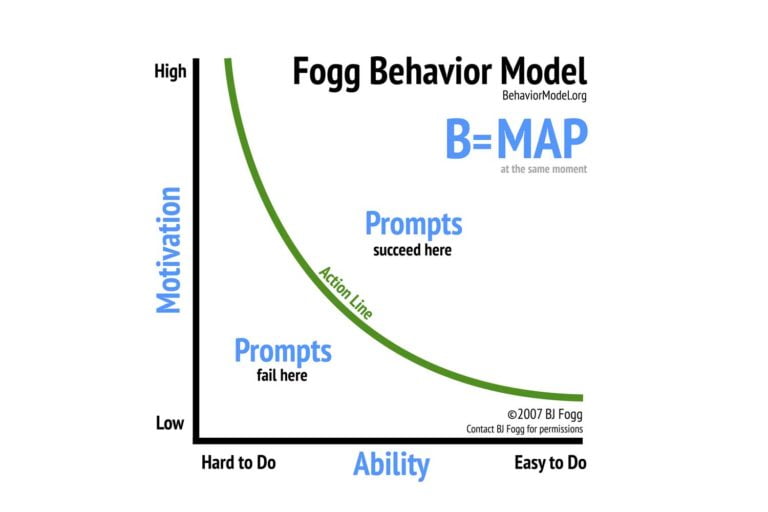

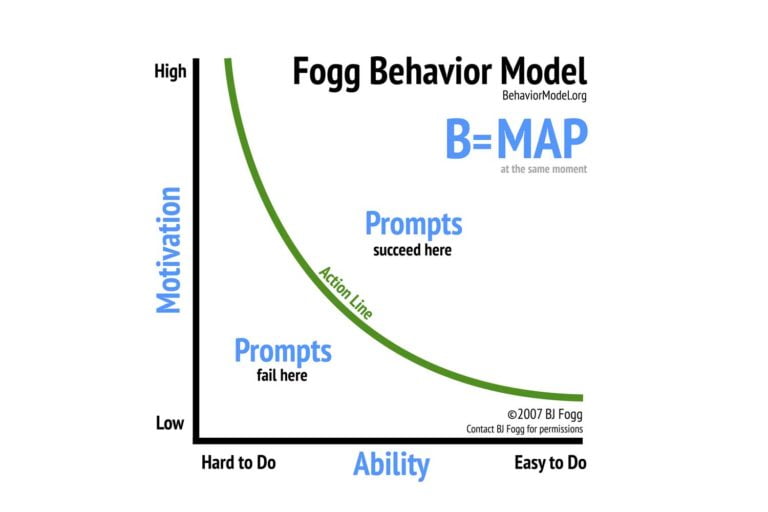

According to the Fogg Behavior Model, behavior happens when three elements align at the same moment:

B = MAP (Behavior happens when Motivation, Ability, and a Prompt converge)

Source: https://behaviormodel.org/

Prompt, Ability, and Motivation are the ingredients of every habit, good or bad. The Fogg Behavior Model is behind many successful apps on our screens. We can use the same model to redirect our focus to better life habits. Away from Instagram.

By taking charge and controlling Motivations, Abilities and Prompts we can create healthier screentime habits for ourselves, and break digital addictions that compromise our well-being.
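As a rough illustration of how the three ingredients interact, the sketch below treats motivation and ability as scores between 0 and 1 and assumes a behavior fires only when a prompt arrives while their product is above a threshold. The numeric scale, the product rule, and the `ACTION_LINE` value are assumptions made for this example only; the Fogg Behavior Model itself is a qualitative diagram, not a formula with these numbers.

```python
from dataclasses import dataclass

# Hypothetical threshold standing in for the "action line" in the model's diagram.
ACTION_LINE = 0.25


@dataclass
class Moment:
    motivation: float  # 0.0 (none) to 1.0 (very high); an assumed scale
    ability: float     # 0.0 (very hard to do) to 1.0 (effortless); an assumed scale
    prompt: bool       # did anything cue the behavior at this moment?


def behavior_happens(moment: Moment) -> bool:
    """Return True when all three elements line up at the same moment."""
    if not moment.prompt:
        # Without a prompt nothing happens, however motivated or able we are.
        return False
    return moment.motivation * moment.ability >= ACTION_LINE


# Phone within reach, notification buzzing, mild curiosity.
buzzing_phone = Moment(motivation=0.4, ability=0.9, prompt=True)
# Same person, notifications disabled: the prompt is gone.
silenced_phone = Moment(motivation=0.4, ability=0.9, prompt=False)
# The notification still arrives, but the app was removed from the phone.
app_removed = Moment(motivation=0.4, ability=0.2, prompt=True)

for name, moment in [
    ("buzzing phone", buzzing_phone),
    ("silenced phone", silenced_phone),
    ("app removed", app_removed),
]:
    print(f"{name}: {behavior_happens(moment)}")
```

In this toy version, only the first moment leads to the behavior. Taking away the prompt or lowering ability is enough to stop it, even though motivation never changes.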

Remove the Prompt

When trying to remove bad digital behaviors like spending too much time on the phone, motivation or ability is not where we should start. Instead, Dr. Fogg recommends dealing with the prompt first – which is usually much easier.

Digital media creators invested a lot of money and effort into designing what behavioral science calls context prompts. Notifications are a prime example.

A notification is a call to action that catches your attention on the screen and demands that you deal with it – now! It’s a buzz from your phone. It’s a red circle notifying you of new activity within an app. It’s a pop-up message. A click-bait news headline. It’s anything that distracts you from what you are doing at the moment.

If you want to beat the habit of grabbing the phone, you need to undo the prompt designed to get you to act. Disabling notifications interrupts the interface of the prompt.

BJ Fogg writes: “We may never find a perfect way to stop unwanted prompts from companies with business models that depend on us to click, read, watch, share, or react. This is a difficult problem that pits our human frailties against brilliant designers and powerful computer algorithms”.

It’s up to us to remove the prompt, avoid the prompt, or ignore the prompt.

We want to be notified when an actual person is trying to reach us, when we have an important appointment, or need to remember a loved one’s birthday – these are all good prompts. But our life is crowded with a flood of notifications we don’t really want, that add no value but only hijack our attention. Do you really need a reminder from Candy Crush that you have not played in a while?

Disrupting the constant interface of notifications and reminders will become more important in the future as machine algorithms learn to bombard us with targeted behavioral prompts designed to be impossible to ignore. As people learn to resist traditional notifications, these new supercharged prompts will be customized by AI for your individual situation to make sure you pay attention, even against your will.

Prompts will be psychologically powerful and timed precisely to your weakest moment.

A perfect example is the scene from the Social Dilemma documentary where the main character, who made a pact with his mother to stay off the phone for a week, accidentally sees a notification chosen by the algorithms to get him back online:

“Guess what – your ex-girlfriend is in a new relationship!”

He exclaims: “You gotta be kidding me!”, grabs the phone, and once again becomes the victim of evil AI manipulation.

Hack Ability

Prompts trained our brains to respond automatically, without thinking. The more apps, the more they pester us with prompts. BJ Fogg recommends redesigning our ability to engage in bad digital habits by limiting apps’ access to our attention. By making a habit more difficult to do.

In Tiny Habits, Dr. Fogg introduces the concept of an Ability Chain: Time, Money, Physical Effort, Mental Effort, and Routine. Each link makes the habit easier – or harder – to do.

If you want to break a habit – break the ability chain.

Here is how we “break an ability chain” for our kids’ screentime in our family:

Time: Devices are only made available for a limited time of the parents’ choice.

Money: We do not charge the kids for using their iPads, but homework and chores need to be done before access is granted.

Physical effort: Kids have no smartphones. Other devices are either password-protected or physically locked up, including the TV remote. There is no tech in the bedrooms.

Mental effort: No self-control is required to resist the temptation of screens, because the parents made doing the right thing the only option.

Routine: Evening screentime is interrupted by important routines – family dinner and sports.

We engineered our kids’ environment for limited screentime. The ability to use screens without permission is just not there. It might be a simple approach, but it works. As adults, however, we have to do the same for ourselves, which is a lot harder. We are in charge of our own ability to engage in digital habits.

Behavior Design would have us brain-storm how to reverse-engineer a bad habit by looking at the links of the ability chain that enables the habit and picking the easiest part to break.

The obvious ability hack is simply removing social media and gaming apps from your phone. Take away your ability to access them easily on the go, and instead use them on another device at a time and place of your choosing, disrupting the cycle of continuous user engagement. Now accessing these apps requires more Time, more Physical Effort, and a different Routine, breaking the habit of mindless scrolling. Remove the biggest offenders: YouTube, Netflix, Facebook, Instagram, video games – these apps are responsible for the majority of the time we waste on our phones.

A person who wants to conquer their social media habit might add Mental Effort by inventing very complex passwords for their accounts and disabling automatic login. They could limit the Time available for the habit by taking advantage of phone screentime settings to cap the use of particular apps. They could break an ability chain by adding phone-free Routines to their schedule – like device-free family dinner or a walk – with the phone left behind.
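One way to picture “picking the easiest link to break” is to list candidate changes, guess how hard each one is to set up, and sort. The sketch below does just that. The `Friction` type, the effort scores, and the choice of entries are made up for illustration and are not taken from Tiny Habits.

```python
from dataclasses import dataclass

# The five links of the Ability Chain named in the text.
LINKS = ("Time", "Money", "Physical Effort", "Mental Effort", "Routine")


@dataclass
class Friction:
    link: str          # which link of the chain this change targets
    change: str        # what we would actually do
    setup_effort: int  # 1 (trivial) to 5 (painful); a subjective guess


def easiest_first(options: list[Friction]) -> list[Friction]:
    """Order candidate changes so the easiest link to break comes first."""
    for option in options:
        if option.link not in LINKS:
            raise ValueError(f"unknown link: {option.link}")
    return sorted(options, key=lambda option: option.setup_effort)


options = [
    Friction("Mental Effort", "use a long password and disable automatic login", 3),
    Friction("Time", "cap the app in the phone's screentime settings", 2),
    Friction("Physical Effort", "leave the phone in another room while working", 1),
    Friction("Routine", "add a device-free family dinner", 4),
]

for option in easiest_first(options):
    print(f"{option.setup_effort}  {option.link}: {option.change}")
```

With these invented scores, leaving the phone in another room comes out on top. Your own ranking will differ; the point is only to start with the link that costs you the least to break.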

The very presence of a phone ensures that we will be perpetually distracted. It’s like a needle in your vein, steadily dripping the digital media agenda directly into your system. After all, user engagement depends on the presence of the screen, and if it’s always by your side, there is no escape. Even if you silence your phone, you can still glance at it and see notifications, which create a psychological storm in your brain and force you to react to them.

A simple action of putting some distance between you and your phone – placing it in another room with the sound off – can give you back hours of productive work. Or a peaceful night’s sleep.

Hack Motivation

Motivation is the hardest of the three ingredients to work with when changing bad digital habits. The first challenge is becoming aware of what we are actually doing and recognizing that we might have a problem with technology. We need to make our automatic habits a subject of conscious awareness in order to control them. Carl Jung said:

“Until you make the unconscious conscious, it will direct your life and you will call it fate”.

What is unconscious is uncontrollable by definition.

However, giving people the right information is not enough to change behavior. People know junk food is bad for them but keep eating it. Smokers know about lung cancer but keep smoking. Public awareness campaigns about screentime harms have been great but have not moved the needle yet. We all know the phones are addictive but cannot put them down.

One would think that once people recognize they are being manipulated for profit, they would be equipped to resist, to say no. But it’s not that easy. People who are addicted to anything are well aware the substance or behavior is not good for them, but knowledge alone is not enough to overcome a hard-wired bad habit.

We intuitively know that 12 hours a day on the screens is harmful. We feel guilty and frustrated with ourselves. We procrastinated on our work projects. We missed a workout. We paid no attention to our friends and family. The motivation to change our screentime habits is there – but motivation alone is not enough. When we try to change our eating habits, all the motivation in the world may not help us if we find ourselves in the presence of freshly baked chocolate-chip cookies.

We have to design our environment to make the right thing easy to do – and the wrong thing hard to do. Cookies should not be in the picture.

Motivations are very unstable, subject to temptations and internal conflicts. They come and go – just think of New Year’s resolutions! In our moments of weakness our motivation to do the right thing (concentrate on work) drops below the action line in the Fogg Behavior Model – and we procrastinate. While our motivation to do the wrong thing (be distracted from work) peaks above the action line – and we spend hours scrolling on social media instead.

Our desire to decrease screen time may also not be as strong as our deep psychological motivation for connection and belonging, exploited by social media to keep us on their platforms. Such motivations are hardwired in our brains by evolutionary biology, and they are not going anywhere. We want to stay in touch with our friends and family. Grandma wants to see pictures of her grandkids, especially if they live far away. Teenagers want to be liked by their friends. Popularity and status obtained through likes, shares and followers are a hallmark of success. We are addicted to each other.

BJ Fogg writes: “LinkedIn has invested a lot of time and money to tell you that 233 people have looked at your profile and you need to click to see who they are”. You are curious and flattered by all the attention. It’s hard to resist. By design.

With such powerful conflicting motivations, “reduced screen time” is just too abstract a goal. It’s not going to happen until it is supported by specific small behaviors that we can realistically get ourselves to do, and that are effective at solving the problem – BJ Fogg calls these “Golden Behaviors”. One example is switching the phone into airplane mode for the time you have decided to devote to work. Easy to do – and effective.

Where can we find Golden Screentime Behaviors?

Screentime Reduction Project

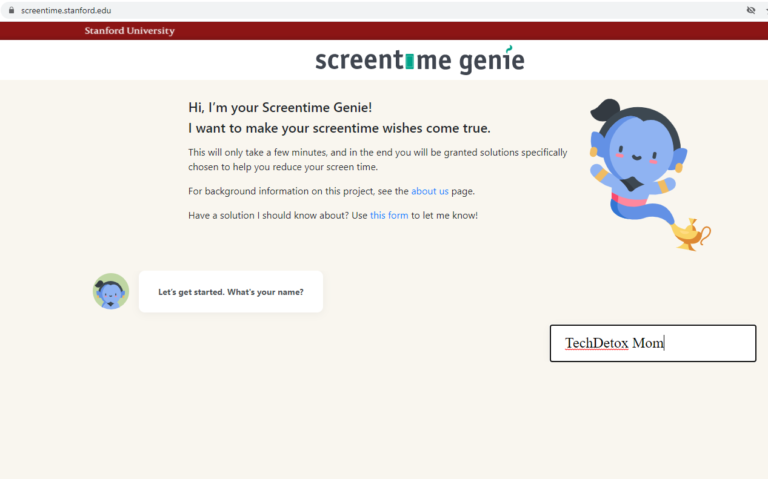

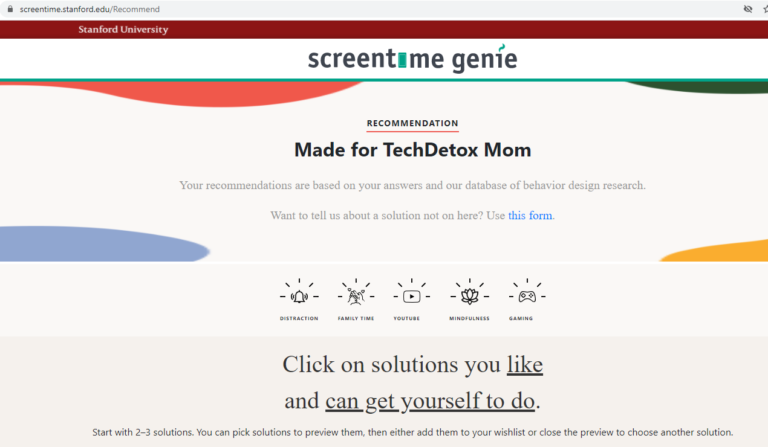

To help people solve their screen time problems, the Stanford Behavior Design Lab launched a Screentime Reduction Project and compiled the world’s largest database of methods for reducing screen time into one online tool that can be found here:

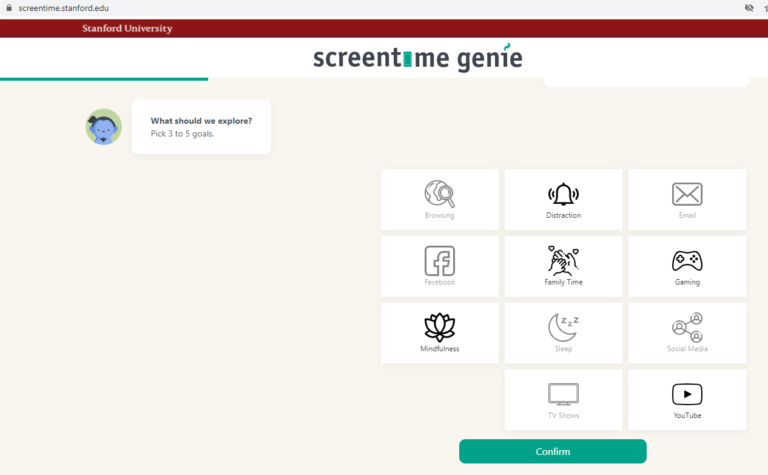

There is a really cute “Screentime Genie” character that asks you what platforms you use and what your biggest screentime challenges are. It then recommends custom solutions that fit your situation. It’s very simple to use, takes only a few minutes, and leaves you with actionable steps to improve your relationship with technology.

Here are the steps I went through for myself:

Step 1: Screentime Genie

Step 2: Pick your screentime goals

I chose Mindfulness, Distraction, and Family Time. And two more that looked more like problems: YouTube (me) and Gaming (my kids).

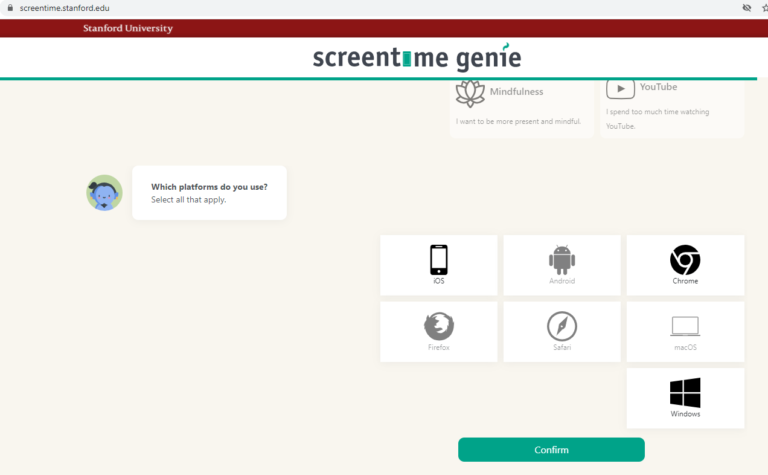

Step 3: Choose your platforms

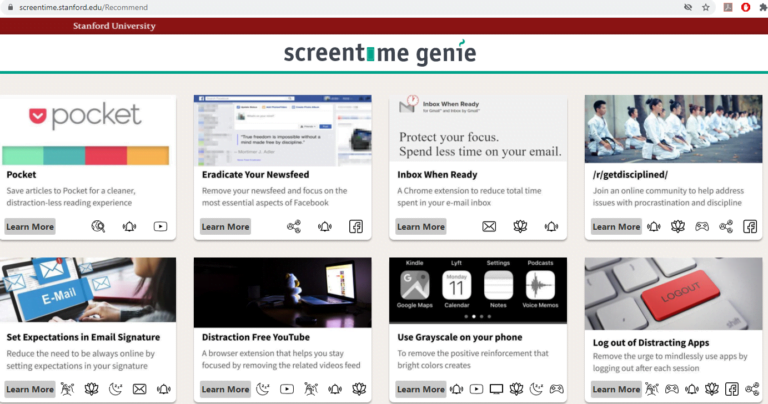

Step 4: A list of solutions - behavior changes and screen time products

Step 5: Explore each solution

Step 6: Decide What You Can Get Yourself to Do

In accordance with Behavior Design principles, decide which solutions you LIKE and CAN GET YOURSELF TO DO. And start DOING them. These will become your Golden Screentime Behaviors.
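The selection in this last step can be sketched as a simple filter: keep only the solutions that are effective, that you like, and that you can realistically get yourself to do. The `Candidate` type, the candidate list, and the yes/no ratings below are hypothetical examples, not output from the Screentime Genie.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    effective: bool  # would it actually reduce my screen time?
    like_it: bool    # do I like the idea?
    can_do: bool     # can I realistically get myself to do it?


def golden_behaviors(candidates: list[Candidate]) -> list[str]:
    """Keep only the behaviors that pass all three checks."""
    return [c.name for c in candidates if c.effective and c.like_it and c.can_do]


# Made-up personal ratings for four possible solutions.
candidates = [
    Candidate("airplane mode during work hours", True, True, True),
    Candidate("quit all social media for good", True, False, False),
    Candidate("phone in another room at night", True, True, True),
    Candidate("rearrange the home screen icons", False, True, True),
]

for name in golden_behaviors(candidates):
    print(name)
```

Only two of the four candidates survive the filter here, which is the idea: a short list you will actually follow beats a long list you will not.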

Maxims of Behavior Change

In Tiny Habits, BJ Fogg summarized the fundamental principles of behavior change in two Fogg Maxims:

#1. Help people do what they already want to do

#2. Help people feel successful

This is the best thing about Behavior Design: behavior change is supposed to be easy and it is supposed to feel good.

We can change our habits in tiny ways, and feel great about it. It could be our best line of defense – given to us by the same scientists whose work was used in developing addictive technologies. We can replace bad digital impulses that were intentionally built into us as an automatic response – such as constantly checking the phone – with good digital habits of OUR choice.

All of our big bad screentime habits started small: social media, online shopping, video gaming, obsessive news feed scrolling. The tech platforms behind these habits – Amazon, Google, Facebook, Instagram – all started small too and grew when their products became hardwired as habits.

Digital wellbeing can also start small – and grow bigger to help us flourish.
